This initiative first appeared last year, under the same name, at NeurIPS 2020. The high-level perspective paper presented on the website, Open Problems in Cooperative AI, gives a general overview of the main challenges in this emerging field, which it defines as AI research aimed at helping individuals, humans and machines alike, find ways to improve their joint welfare. The field connects several research clusters, such as multi-agent AI, game theory and strategic interaction.

The paper frames the field in terms of dimensions of cooperative opportunities and puts forward three main ones: (1) understanding other agents, their beliefs, incentives and capabilities; (2) communication between agents, including building a shared language and overcoming mistrust and deception; and (3) constructing cooperative commitments, so as to overcome incentives to renege on a cooperative arrangement.

This year, a wide range of applications appeared, from banking and peer review to autonomous driving and even fake news.

Today we take a closer look at some of the ideas from the poster session. We would like to thank the authors for their work and effort, and the organizers for putting it all together. You can find all the papers listed here.

- Towards Incorporating Rich Social Interactions Into MDPs

Authors: Ravi Tejwani et al.

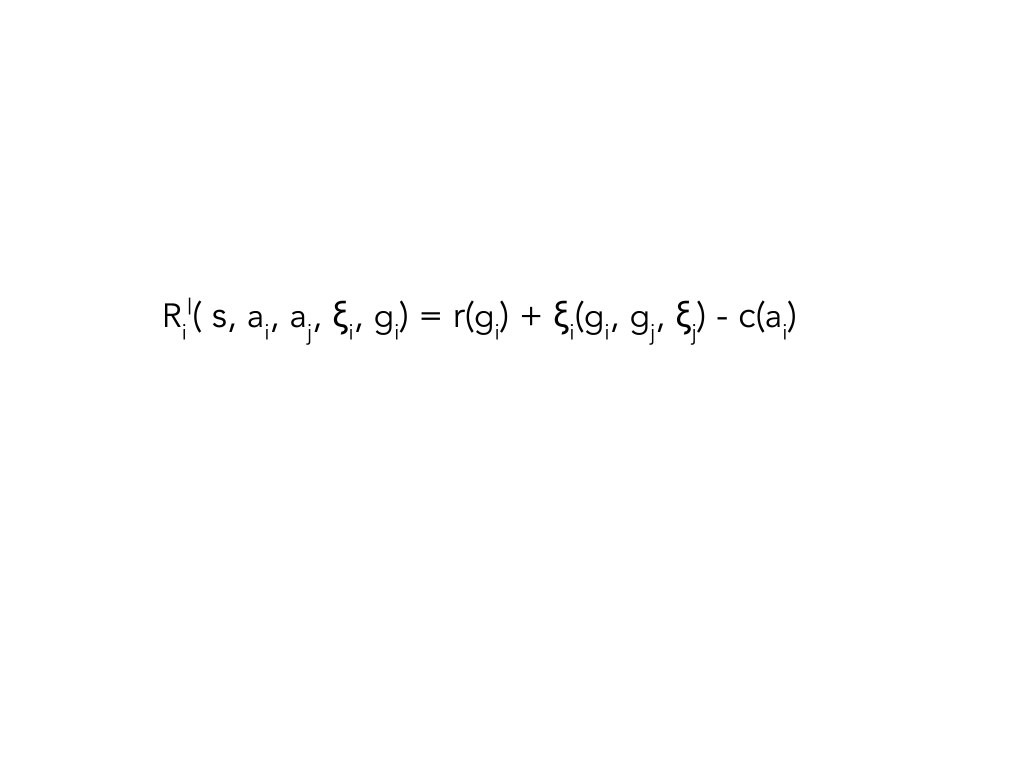

The research idea in this paper is to extend nested MDPs (Markov Decision Processes, the standard formalism in reinforcement learning) into a Social MDP, in which agents reason about arbitrary functions of other agents' rewards. A linear reward function is estimated for each agent at each timestep. The paper adds two main terms to the well-known MDP tuple: ξ (the estimated reward for other agents, computed at each timestep rather than at the end of each episode) and g (the agent's goal, which can also be another goal).

Fig 1. Decomposition of each agent's reward at each timestep into the reward from its goal, plus the reward from the social goal (which depends on each agent's goal and ξ), minus the cost of the action.
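As a rough illustration of the decomposition in Fig 1, here is a minimal sketch. It assumes that ξ enters as a simple linear weight on the estimated reward of the other agent; the function name, the argument names and the sign interpretation of ξ are hypothetical and not taken from the paper.

```python
def social_reward(goal_reward, other_reward_estimate, xi, action_cost):
    """Per-timestep reward: own goal term + xi-weighted social term - action cost.

    Assumption: the social term is linear in the other agent's estimated reward.
    """
    return goal_reward + xi * other_reward_estimate - action_cost


# Assumed reading: xi > 0 means the agent gains when the other agent does well,
# xi < 0 means it gains when the other agent does badly.
helper = social_reward(goal_reward=1.0, other_reward_estimate=2.0, xi=0.5, action_cost=0.1)
adversary = social_reward(goal_reward=1.0, other_reward_estimate=2.0, xi=-0.5, action_cost=0.1)
print(helper, adversary)
```

With the same goal reward and action cost, only the sign of ξ changes whether the other agent's success adds to or subtracts from the agent's own reward.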

- Ambiguity Can Compensate for Semantic Differences in Human-AI communication

Authors: Ozgecan Kocak, Sanghyun Park, Phanish Puranam

This paper treats ambiguity and semantic differences as communication difficulties between a human and an AI. The main idea is to decouple the semantic structure of words from the stimuli (what the agents are seeing), giving weight to the latter, and to measure the semantic difference between agents with a normalized parameter δ defined within a matrix structure.

A key point of the algorithm is that it iterates until the agents' codes (their languages) converge, which means they understand each other. An interesting finding described in the paper is that agents with less ambiguous language need longer periods of unlearning to weaken their prior associations before they can explore new associations and find a match with the other agent.
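To make the "iterate until the codes converge" idea concrete, here is a deliberately simplified toy. It assumes each agent's code is a plain stimulus-to-word mapping and that the normalized difference is the fraction of stimuli labelled differently. It does not model ambiguity or unlearning, and the names `semantic_difference` and `align` are hypothetical rather than the paper's.

```python
def semantic_difference(code_a, code_b):
    """Fraction of stimuli the two agents label with different words (0 = identical codes)."""
    mismatches = sum(code_a[s] != code_b[s] for s in code_a)
    return mismatches / len(code_a)


def align(code_a, code_b, max_rounds=100):
    """Toy alignment loop: agent B adopts A's word for one mismatched stimulus per round."""
    code_b = dict(code_b)  # work on a copy so the caller's mapping is untouched
    rounds = 0
    while semantic_difference(code_a, code_b) > 0 and rounds < max_rounds:
        stimulus = next(s for s in code_a if code_a[s] != code_b[s])
        code_b[stimulus] = code_a[stimulus]
        rounds += 1
    return code_b, rounds


human = {"circle": "blip", "square": "zog", "star": "mek", "cross": "tav"}
machine = {"circle": "blip", "square": "mek", "star": "zog", "cross": "tav"}

delta = semantic_difference(human, machine)
aligned, rounds = align(human, machine)
print(delta, rounds, semantic_difference(human, aligned))
```

Here the two agents disagree on two of four stimuli, so the loop needs two rounds before the normalized difference reaches zero.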

- Promoting Resilience in Multi-Agent Reinforcement Learning via Confusion-Based Communication

Authors: Ozgecan Kocak, Sanghyun Park, Phanish Puraman

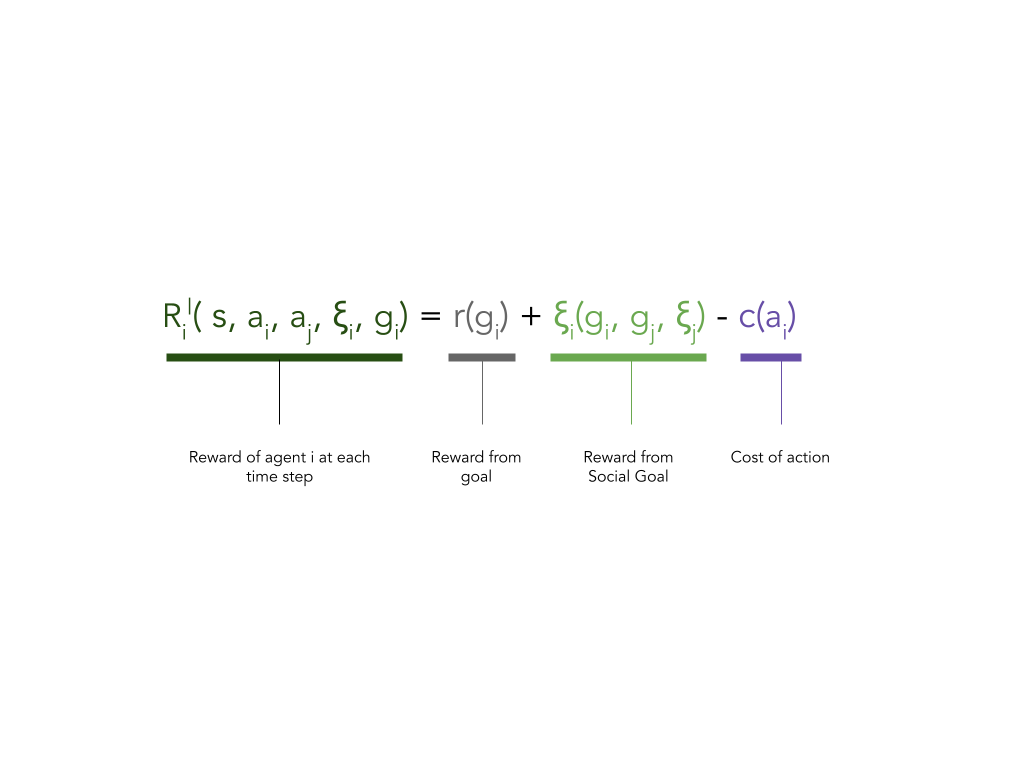

This work is part of a larger agenda exploring cooperation and adaptation to perturbation (resilience). The paper proposes a way to measure group resilience based on the distance between the original MDP M and a modified MDP M', and focuses on how agents can collaborate effectively, maintaining performance while adapting to perturbations.

To achieve collaboration between agents, they introduce confusion-based communication, which improves performance and the group's resilience. In these messages, agents send what the authors define as a confusion level. Agents aim to minimize this measure, and a low value indicates that they are being resilient.

Fig 2. The level of confusion of agent p at state $s_i$ after taking action $a_i$, denoted $J_{s_i,a_i}$.
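One way to read "maintaining performance while adapting to perturbation" is as the fraction of baseline return a group keeps in perturbed environments that lie within some distance of the original MDP. The sketch below works under that assumption only: the ratio-based score, the `distance` field and both function names are illustrative, not the paper's definitions.

```python
def within_distance(perturbations, max_distance):
    """Keep only perturbed environments whose distance from the original MDP is at most max_distance."""
    return [p for p in perturbations if p["distance"] <= max_distance]


def group_resilience(baseline_return, perturbed_returns):
    """Worst-case fraction of the baseline return kept across the perturbed environments."""
    if baseline_return <= 0:
        raise ValueError("baseline_return must be positive for the ratio to be meaningful")
    return min(r / baseline_return for r in perturbed_returns)


# Hypothetical evaluation results: group return in M is 100.0.
perturbations = [
    {"distance": 1, "return": 90.0},
    {"distance": 2, "return": 75.0},
    {"distance": 5, "return": 20.0},
]

nearby = within_distance(perturbations, max_distance=2)
resilience = group_resilience(100.0, [p["return"] for p in nearby])
print(resilience)
```

Taking the minimum rather than the mean is a design choice that makes the score a worst-case guarantee over the perturbations considered.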

- Locality Matters: A Scalable Value Decomposition Approach for Multi-Agent Reinforcement Learning

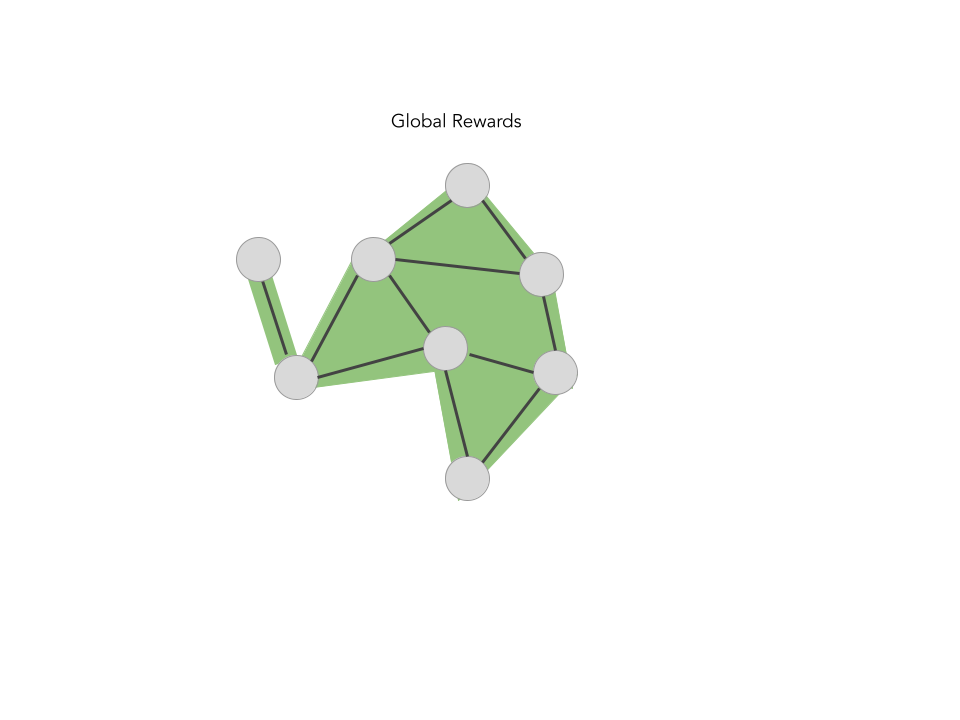

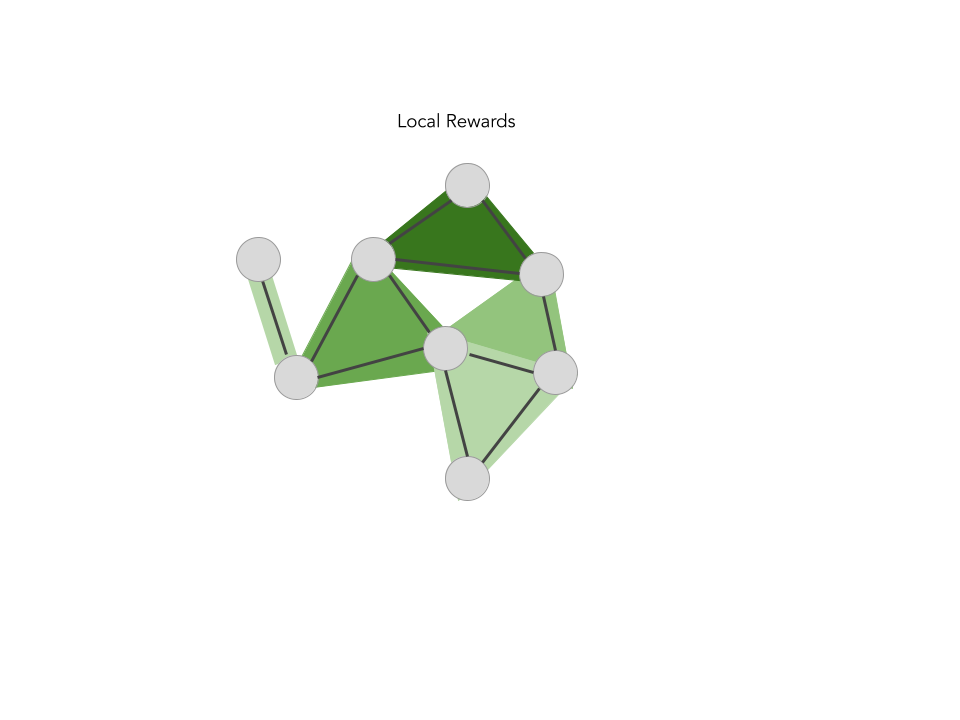

This paper explores decoupling the global reward used for cooperation into local rewards, using a graph-based structure. They also propose the LOMAQ algorithm, which builds heavily on the well-known QMIX. One of the main ideas is that the Q-function can be maximized by partitioning the agents and combining the partial maximizers of each partition.

Another interesting contribution, as mentioned above, is a method for decomposing the global reward function into local reward functions using a deep neural network.

Fig 3. A visualization of MARL training on a graph of 8 agents. The colored regions represent the feedback that the agents exhibit during training.
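The "partition of partial maximizers" idea can be illustrated with a small sketch. It assumes the global Q-value is a plain sum of local Q-values over disjoint groups of agents, which is simpler than what LOMAQ actually learns; the local functions `q_left` and `q_right` are made up for the example.

```python
from itertools import product

ACTIONS = (0, 1, 2)


def q_left(a0, a1):
    """Hypothetical local Q-value for the partition {agent 0, agent 1}."""
    return -(a0 - 1) ** 2 + a0 * a1


def q_right(a2):
    """Hypothetical local Q-value for the partition {agent 2}."""
    return 3 - abs(a2 - 2)


def q_global(joint):
    """Assumed additive decomposition of the global Q-value."""
    a0, a1, a2 = joint
    return q_left(a0, a1) + q_right(a2)


# Brute force: search all 3^3 joint actions.
best_joint = max(product(ACTIONS, repeat=3), key=q_global)

# Partition-wise: maximize each local term on its own, then concatenate.
best_left = max(product(ACTIONS, repeat=2), key=lambda a: q_left(*a))
best_right = max(ACTIONS, key=q_right)
best_partitioned = (*best_left, best_right)

print(best_joint, best_partitioned)
```

Because the partitions share no agents, the concatenated partial maximizers match the brute-force maximizer, while the search cost grows with the largest partition rather than with the total number of agents.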

- Disinformation, Stochastic Harm, and Costly Effort: A Principal-Agent Analysis of Regulating Social Media Platforms

An interesting exploration of how social media platforms are used for misinformation. The problem is defined as an MDP with the social platform as the agent and a regulator as an entity whose purpose is to maximize social welfare. The regulator is reminiscent of the institutions discussed in the Open Problems paper.

One of the key concepts here is the effort e exerted by the platform, with the reward expressed through c(e), the cost of that effort; the platform's overall goal is to minimize this cost. They also model other quantities, such as harm, as functions.
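A toy version of the platform's trade-off might look like the sketch below. Everything specific in it is an assumption made for illustration: the quadratic cost c(e) = e², a harm probability that falls linearly with effort, and a `penalty` the regulator charges when harm occurs. None of these functional forms come from the paper.

```python
def effort_cost(e):
    """Assumed convex cost of effort, c(e) = e^2."""
    return e ** 2


def harm_probability(e):
    """Assumed probability of harm, decreasing linearly in effort on [0, 1]."""
    return 1.0 - e


def best_effort(penalty, grid_size=100):
    """Effort level on a grid over [0, 1] that minimizes cost plus expected penalty."""
    efforts = [i / grid_size for i in range(grid_size + 1)]
    return min(efforts, key=lambda e: effort_cost(e) + penalty * harm_probability(e))


for penalty in (0.0, 1.0, 2.0):
    print(penalty, best_effort(penalty))
```

Under these assumptions the platform exerts no effort when harm is unpenalized and more effort as the penalty grows, which is the lever a welfare-maximizing regulator would use.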

- Multi-lingual agents through multi-headed neural networks

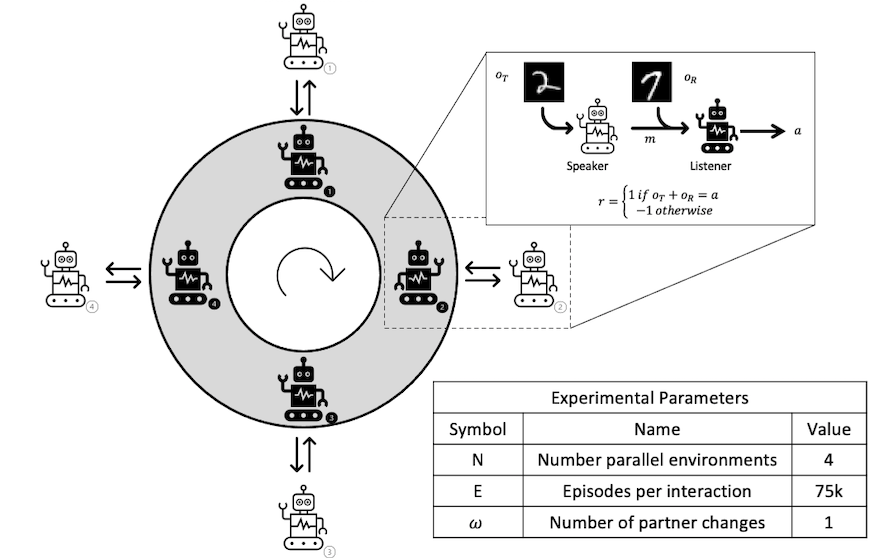

This paper explores catastrophic forgetting during communication between agents. The poster is very neat and introduces an interesting Communication Carousel game: during training, after every E episodes, a rotation protocol pairs each agent with a different partner, reinforcing its messages across partners in order to avoid catastrophic forgetting.

Fig 4. Communication Carousel. Illustration of the N parallel referential games. After E episodes, the carousel rotates, and all agents interact with a different partner. This continues for the desired number of rotations, after which all agents are returned to their original partner for assessment of emergent language maintenance.
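The rotation described in the Fig 4 caption can be sketched as a pairing schedule. This assumes each game has one speaker and one listener and that the carousel shifts listeners by one position per rotation; the roles, the shift rule and the function name are assumptions, and the E training episodes between rotations are left out.

```python
def carousel_schedule(n_pairs, n_rotations):
    """List of pairings, one per stage: initial pairing, each rotation, then the final assessment.

    Each pairing is a list of (speaker, listener) index tuples.
    """
    schedule = []
    for rotation in range(n_rotations + 1):
        # Speaker i talks to the listener shifted by `rotation` positions.
        pairs = [(speaker, (speaker + rotation) % n_pairs) for speaker in range(n_pairs)]
        schedule.append(pairs)
    # Final stage: everyone returns to their original partner for assessment.
    schedule.append([(speaker, speaker) for speaker in range(n_pairs)])
    return schedule


schedule = carousel_schedule(n_pairs=3, n_rotations=2)
for stage, pairs in enumerate(schedule):
    print(stage, pairs)
```

The first and last stages use the same pairing, so any drop in communication success between them can be attributed to what happened during the rotations.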

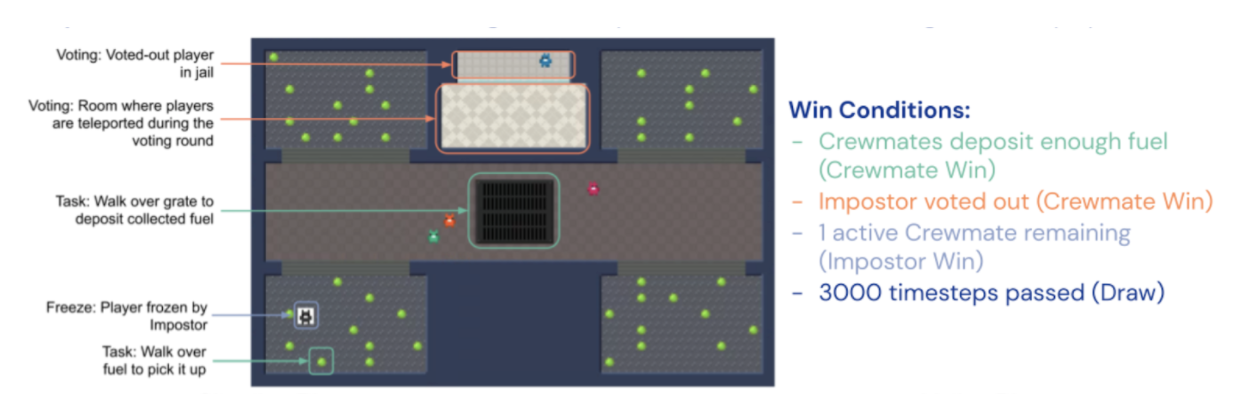

- Hidden Agenda: a Social Deduction Game with Diverse Learned Equilibria

They present Hidden Agenda, a two-team social deduction game that provides a 2D environment for studying learning agents in scenarios where team alignment is unknown. The environment admits a rich set of strategies for both teams.

The game is played by two teams: four crewmates (who have the numerical advantage) and one impostor (who has the information advantage of knowing all players' roles). It has the following actions and rewards:

Fig 5. In the Hidden Agenda environment, both teams share the same action space, divided into Situation and Voting phases. In the situation phase, players can move in the 4 cardinal directions and turn; the impostor can also fire a freeze beam. In the voting phase, all players vote in order to discover who the impostor is. Rewards consist of a team-based reward at the end of each episode and an individual reward for completing certain tasks.
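The voting phase can be pictured with a small tally function. The exact voting rules are not given here, so this sketch assumes that a player is voted out only on a strict plurality and that abstentions are allowed; the player names and the `tally_votes` helper are hypothetical.

```python
from collections import Counter


def tally_votes(votes):
    """Return the player with a strict plurality of votes, or None on a tie or if nobody votes.

    `votes` maps each voter to the player they accuse, or to None for an abstention.
    """
    counts = Counter(target for target in votes.values() if target is not None)
    if not counts:
        return None
    ranked = counts.most_common(2)
    if len(ranked) == 2 and ranked[0][1] == ranked[1][1]:
        return None  # tie between the two most-accused players
    return ranked[0][0]


# Four crewmates (p0..p3) and one impostor (p4), who deflects onto p1.
votes = {"p0": "p4", "p1": "p4", "p2": "p1", "p3": "p4", "p4": "p1"}
print(tally_votes(votes))
```

In this example three crewmates accuse the impostor while the impostor and one misled crewmate accuse p1, so the crewmates' numerical advantage decides the vote.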

Although Cooperative AI took place as a NeurIPS workshop, it is part of a broader initiative to push the boundaries and progress of cooperative agents in their different forms, aiming to build the infrastructure of the field. To learn more about their foundations, don't hesitate to visit their website.