
Last October, I had the opportunity to attend the Global Software Architecture Summit, an event organized by Apiumhub in Barcelona. One of the talks I enjoyed the most was “Metrics for Architects” by Alexander von Zitzewitz, founder and CEO of hello2morrow. In his presentation, Alexander shared several metrics that architects should track as a project progresses, to prevent spaghetti code and keep the final product easy to maintain and less prone to bugs.

Why should you use metrics for architects?

These are some of the points Alexander went through during his talk Metrics for Architects:

• Metrics are the foundation of the crucial “verify/measure” node of the continuous improvement loop. What gets measured can be improved.

• Free tools make it easy to measure them

• Automated measurement in CI builds lets you discover harmful trends early (and break the build when they appear)

• You can enforce quality standards by using metrics in quality gates

• Some metrics are excellent architectural fitness functions

If these reasons are not enough, you can read a report from The Evolving Enterprise.

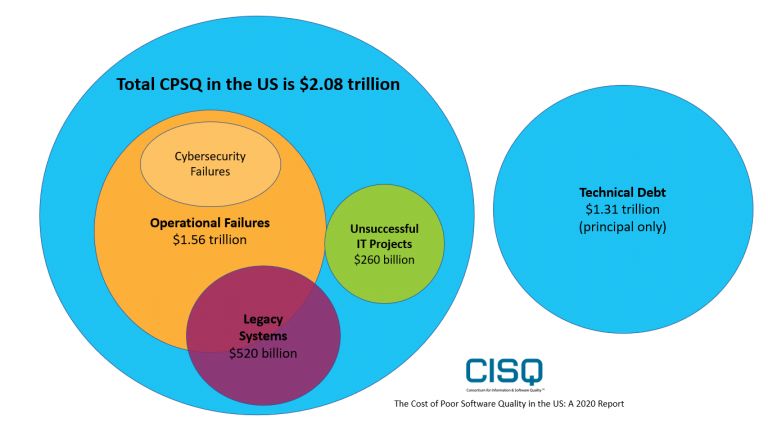

Poor software quality cost the US $2.08trn in 2020.

The report I mentioned above talks about how low-quality software costs a lot of money. Some relevant findings are:

- Failed projects cost $260 billion, up 46% from 2018

- Legacy system problems contributed to around $520 billion

This report also gives some recommendations:

- Ensure early and regular analysis of source code to detect violations, weaknesses, and vulnerabilities.

- Measure structural quality characteristics.

- Recognize the inherent difficulties of developing software and use effective tools to help deal with those difficulties.

Why is nobody using metrics for architects then?

The report is clear: code should be analyzed to find issues. Are we not aware of the existing tooling? Or perhaps we do not know which metrics are appropriate, given the many options available. Furthermore, a single metric alone is not enough to obtain accurate results; a combination of metrics is usually necessary.

How to measure spaghettization of code?

What characterizes spaghetti code? It has high coupling and too many circular dependencies, there is no clear separation of responsibilities, features are spread all over the codebase, and sometimes we find duplicated code.

If we compare spaghetti code with clean code, we can see that a good codebase can dramatically reduce the cost and time of a project while improving its quality.

| Spaghetti code | Clean code |
| --- | --- |
| Much reduced team velocity | Improved developer productivity |
| Frequent regression bugs | Lower risk |
| Hard to maintain, test and understand | Easier to maintain, test and understand |
| Modularization is impossible | Much lower cost of change |

Tools for metric gathering

• Understand (commercial) scitools.com

• NDepend (commercial, .NET) ndepend.com

• Sonargraph-Explorer (free) hello2morrow.com

• SourceMonitor (free) https://www.derpaul.net/SourceMonitor/

Good metrics for architects to measure structural decay

Let’s check some good metrics that may help us to avoid the deterioration of our project:

- ACD (Average Component Dependency) – a coupling metric

  - It gives us the average number of direct and indirect dependencies per component.

- Relative Cyclicity – focuses on cyclic dependencies

- Structural Debt Index – an improved analysis of cyclic dependencies

- Maintainability Level – measures coupling, verticalization, and cycles
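
ACD can be computed from a dependency graph. The following is a minimal Python sketch, assuming the graph is given as a plain dictionary that maps each component to the components it uses directly. Following John Lakos's Cumulative Component Dependency (CCD), each component is counted as depending on itself, so a system of fully independent components has an ACD of 1. The function names are hypothetical.

```python
from collections import deque


def depends_on(graph: dict[str, list[str]], start: str) -> set[str]:
    """Components `start` depends on directly or indirectly, including itself."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for dep in graph.get(node, []):
            if dep not in seen:  # the `seen` check also prevents looping forever on cycles
                seen.add(dep)
                queue.append(dep)
    return seen


def average_component_dependency(graph: dict[str, list[str]]) -> float:
    """ACD = CCD / number of components, where CCD sums every component's dependency count."""
    components = set(graph) | {dep for deps in graph.values() for dep in deps}
    ccd = sum(len(depends_on(graph, c)) for c in components)
    return ccd / len(components) if components else 0.0


# A -> B -> C: A depends on 3 components (itself, B, C), B on 2, C on 1
layered = {"A": ["B"], "B": ["C"], "C": []}
print(average_component_dependency(layered))  # 2.0

# Adding C -> A creates a cycle: now every component depends on all three
cyclic = {"A": ["B"], "B": ["C"], "C": ["A"]}
print(average_component_dependency(cyclic))   # 3.0
```

The second example shows why ACD and cycles are related: closing a single cycle makes every component in it depend on all the others, so coupling jumps even though only one edge was added.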

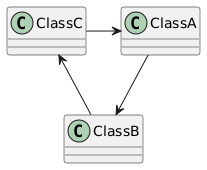

Cyclic dependencies

A cyclic dependency occurs when, for example, class A depends on B, B depends on C, and C depends on A again.

Why are cyclic dependencies bad?

- Testing becomes harder

- Modularization becomes more difficult

- Coupling increases

- Cycle groups with more than 5 elements should be avoided

- Namespace/package cycles are especially bad and should break the build

In the end, we should be able to break all cycles.

Metrics for Cyclic Dependencies:

- Number of cycle groups / biggest cycle group size

- Cyclicity / Relative Cyclicity

- Structural Debt Index
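
Cycle groups are the strongly connected components of the dependency graph that contain more than one element. A minimal sketch using Tarjan's algorithm, assuming a dictionary-based graph (class name mapped to the classes it depends on), reports the number of cycle groups and the size of the biggest one. The function name `cycle_groups` is hypothetical.

```python
def cycle_groups(graph: dict[str, list[str]]) -> list[list[str]]:
    """Strongly connected components with more than one element (Tarjan's algorithm).

    Recursive for brevity; very deep graphs would need an iterative version.
    """
    index: dict[str, int] = {}
    lowlink: dict[str, int] = {}
    on_stack: set[str] = set()
    stack: list[str] = []
    groups: list[list[str]] = []

    def strongconnect(v: str) -> None:
        order = len(index)  # discovery order of v
        index[v] = order
        lowlink[v] = order
        stack.append(v)
        on_stack.add(v)
        for w in graph.get(v, []):
            if w not in index:        # not visited yet: recurse
                strongconnect(w)
                lowlink[v] = min(lowlink[v], lowlink[w])
            elif w in on_stack:       # edge back into the component being built
                lowlink[v] = min(lowlink[v], index[w])
        if lowlink[v] == index[v]:    # v is the root of a component
            component = []
            while True:
                w = stack.pop()
                on_stack.discard(w)
                component.append(w)
                if w == v:
                    break
            if len(component) > 1:    # a lone class without a cycle is not a cycle group
                groups.append(sorted(component))

    nodes = set(graph) | {dep for deps in graph.values() for dep in deps}
    for v in sorted(nodes):
        if v not in index:
            strongconnect(v)
    return groups


deps = {"A": ["B"], "B": ["C"], "C": ["A"], "D": ["E"], "E": ["D"], "F": ["A"]}
groups = cycle_groups(deps)
print(len(groups))                               # 2 cycle groups
print(max((len(g) for g in groups), default=0))  # biggest group has 3 elements
```

Note that F depends on the A-B-C cycle without being part of it, so it is not counted in any cycle group.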

Cyclicity

The cyclicity of a cycle group is the square of its size; e.g., a group with 3 elements has a cyclicity of 9. System/module cyclicity is the sum of the cyclicity values of all cycle groups. A bigger number shows that many classes are involved in cycles, and large cycle groups are much harder to break.
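
The formula is simple enough to write down directly. This small Python function takes the sizes of the cycle groups (for example, as reported by an analysis tool) and shows why squaring matters: the same number of classes weighs far more when tangled into one big group than when split into small ones.

```python
def cyclicity(group_sizes: list[int]) -> int:
    """System/module cyclicity: sum of the squared sizes of its cycle groups."""
    return sum(size * size for size in group_sizes)


print(cyclicity([3]))        # 9, the example from the text
print(cyclicity([2, 2, 2]))  # 12: six classes in three small cycles
print(cyclicity([6]))        # 36: the same six classes tangled in one cycle
```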

Structural Debt Index

This metric focuses on cyclic coupling and on how difficult it would be to break the cycles. Cyclic dependencies are a good indicator of structural erosion.

For each cycle group, two values are computed:

- How many links have to be cut to break the cycle group

- The total number of code lines affected by the links to break

SDI = 10 * LinksToBreak + TotalAffectedLines

The SDI values are then added up for modules and for the whole system. SDI can be computed both on the component level and on the package/namespace level.
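
Once the two values per cycle group are known, the formula is easy to apply. Finding them is the hard part: choosing the smallest set of links to cut is an instance of the feedback arc set problem, which is NP-hard in general, so analysis tools typically rely on heuristics. The Python sketch below therefore assumes the values are already available; the `CycleGroup` type, the cycle groups and the numbers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CycleGroup:
    # Hypothetical input: the numbers are assumed to come from an analysis tool
    name: str
    links_to_break: int  # how many dependencies must be cut to break the group
    affected_lines: int  # total lines of code affected by those dependencies


def structural_debt_index(group: CycleGroup) -> int:
    """SDI = 10 * LinksToBreak + TotalAffectedLines for one cycle group."""
    return 10 * group.links_to_break + group.affected_lines


def total_sdi(groups: list[CycleGroup]) -> int:
    """Module or system SDI: the sum over all of its cycle groups."""
    return sum(structural_debt_index(g) for g in groups)


groups = [
    CycleGroup("billing <-> invoicing", links_to_break=2, affected_lines=35),
    CycleGroup("ui <-> session", links_to_break=1, affected_lines=12),
]
for g in groups:
    print(g.name, structural_debt_index(g))  # 55, then 22
print("module SDI:", total_sdi(groups))      # 77
```

The factor of 10 makes each link to cut count as much as ten affected lines, so a group that needs many small cuts still scores as costly.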

Maintainability level

- Experimental metric in Sonargraph

- Expressed as a percentage: 100% means no coupling

- Should remain stable when there are no major changes to the architecture and design

- Measures decoupling and successful verticalization

- Reducing coupling and cyclicity improves the metric

- One of several indicators of design quality

- Recommended value: 75% or more

Cyclomatic Complexity

Recommended threshold: CCN <= 15
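
To make the threshold concrete, the sketch below uses Python's standard `ast` module to compute a simplified McCabe cyclomatic complexity (one plus the number of decision points) for each function, flags functions above 15, and computes the statement-weighted average used for average cyclomatic complexity. Which constructs count as decision points is an assumption here; tools differ in the details (for example, whether comprehensions or `match` cases count). The helper names are hypothetical.

```python
import ast

CCN_THRESHOLD = 15  # recommended threshold from the talk: CCN <= 15

# Assumed set of decision points (tools differ slightly): each adds one path
DECISION_NODES = (ast.If, ast.For, ast.AsyncFor, ast.While, ast.IfExp, ast.ExceptHandler)
FUNCTION_NODES = (ast.FunctionDef, ast.AsyncFunctionDef)


def own_nodes(func):
    """Yield the nodes of a function body without entering nested defs, classes or lambdas."""
    stack = list(func.body)
    while stack:
        node = stack.pop()
        yield node
        if not isinstance(node, (*FUNCTION_NODES, ast.ClassDef, ast.Lambda)):
            stack.extend(ast.iter_child_nodes(node))


def measure(func):
    """Return (CCN, number of statements) for one function."""
    ccn, statements = 1, 0
    for node in own_nodes(func):
        if isinstance(node, DECISION_NODES):
            ccn += 1
        elif isinstance(node, ast.BoolOp):
            ccn += len(node.values) - 1  # `a and b and c` adds two paths
        if isinstance(node, ast.stmt):
            statements += 1
    return ccn, statements


def analyze(source):
    """Per-function (name, CCN, statements) and the statement-weighted average CCN."""
    tree = ast.parse(source)
    results = [(f.name, *measure(f)) for f in ast.walk(tree) if isinstance(f, FUNCTION_NODES)]
    total = sum(stmts for _, _, stmts in results)
    weighted = sum(ccn * stmts for _, ccn, stmts in results) / total if total else 0.0
    return results, weighted


SAMPLE = '''
def classify(n):
    if n < 0:
        return "negative"
    elif n == 0:
        return "zero"
    for d in (2, 3):
        if n % d == 0 and n != d:
            return "composite"
    return "other"

def answer():
    return 42
'''

results, weighted = analyze(SAMPLE)
print(results)             # [('classify', 6, 8), ('answer', 1, 1)]
print(round(weighted, 2))  # 5.44
print([name for name, ccn, _ in results if ccn > CCN_THRESHOLD])  # []
```

Weighting by statement count keeps many tiny getters and setters from hiding one large, complex method in the average.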

- Average cyclomatic complexity

  - Can be calculated on classes, packages/namespaces, or modules

  - The weighted average of the cyclomatic complexity values of methods/classes

  - Uses the “number of statements” as weights

- Max Indentation Depth (MID)

  - A very good indicator of complexity

  - A sequence of conditional statements or loops is often less complex than a deeply nested conglomeration of loops and conditional statements

  - Recommended threshold: MID <= 4

- LCOM4 (Lack of Cohesion in Methods)

  - The original LCOM metric was introduced by Chidamber & Kemerer; the LCOM4 variant was proposed by Hitz & Montazeri.

  - Calculates the number of cohesive groups within a class.

  - A cohesive group consists of fields and methods interacting with each other.

  - Overridden methods and constructors are ignored.

  - A value of 1 means perfect cohesion.

  - Greater values mean the class can be split up into several classes.

  - Does not always work well in inheritance hierarchies; works best with classes that are not derived from any class other than ‘Object’.
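
A minimal LCOM4 sketch in Python, assuming the per-method information (which fields each method uses and which methods of the same class it calls) has already been extracted, and that constructors and overridden methods have been left out, following the rule in the list above. Methods that share a field or call each other end up in the same group; a union-find structure counts the groups. `MethodInfo`, `lcom4` and the example class are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class MethodInfo:
    fields: set[str] = field(default_factory=set)  # fields the method reads or writes
    calls: set[str] = field(default_factory=set)   # other methods of the same class it calls


def lcom4(methods: dict[str, MethodInfo]) -> int:
    """Number of cohesive groups of methods; 1 means a perfectly cohesive class."""
    parent: dict[tuple[str, str], tuple[str, str]] = {}

    def find(node):
        parent.setdefault(node, node)
        while parent[node] != node:
            parent[node] = parent[parent[node]]  # path halving keeps trees shallow
            node = parent[node]
        return node

    def union(a, b):
        parent[find(a)] = find(b)

    for name, info in methods.items():
        method = ("method", name)
        find(method)  # register methods that touch no field at all
        for f in info.fields:
            union(method, ("field", f))        # sharing a field links two methods
        for callee in info.calls:
            if callee in methods:              # ignore calls to excluded or inherited methods
                union(method, ("method", callee))
    return len({find(("method", name)) for name in methods})


# Hypothetical class: owner handling and item handling never touch each other
cart = {
    "get_owner": MethodInfo(fields={"owner"}),
    "set_owner": MethodInfo(fields={"owner"}),
    "add_item": MethodInfo(fields={"items"}, calls={"total"}),
    "total": MethodInfo(fields={"items"}),
}
print(lcom4(cart))  # 2: the class could be split into two classes

cart["add_item"].calls.add("get_owner")  # now item handling also uses the owner
print(lcom4(cart))  # 1
```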

Architecture Metrics by Robert C. Martin

To wrap up the talk, Alexander discussed some of Uncle Bob's metrics:

- Instability (I)

  - This measure evaluates how susceptible a class is to change.

  - Di = Number of incoming dependencies

  - Do = Number of outgoing dependencies

  - Instability I = Do / (Di + Do)

- Abstractness (A)

  - Abstractness is the number of abstract classes and interfaces in a package divided by the total number of types in that package.

  - Nc = Total number of types in a type container

  - Na = Number of abstract classes and interfaces in a type container

  - Abstractness A = Na / Nc

- Distance (D)

  - A metric that quantifies the balance between stability and abstractness

  - D = A + I - 1

  - Value range [-1 .. +1]

  - Negative values are in the “Zone of pain”

  - Positive values belong to the “Zone of uselessness”

  - Good values are close to zero (e.g. -0.25 to +0.25)
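
The three formulas combine naturally. D = A + I - 1 is the signed distance from what Robert C. Martin calls the main sequence (the line A + I = 1). The Python sketch below computes I, A and D for a few hypothetical packages and classifies them using the ±0.25 band mentioned above. Treating a package with no dependencies at all as stable (I = 0) is an assumption of this sketch, since the formula divides by zero in that case.

```python
from dataclasses import dataclass


@dataclass
class PackageStats:
    incoming: int        # Di: number of incoming dependencies
    outgoing: int        # Do: number of outgoing dependencies
    abstract_types: int  # Na: abstract classes and interfaces
    total_types: int     # Nc: all types in the package

    def instability(self) -> float:
        total = self.incoming + self.outgoing
        # Assumption: a package without any dependencies is treated as stable (I = 0)
        return self.outgoing / total if total else 0.0

    def abstractness(self) -> float:
        return self.abstract_types / self.total_types if self.total_types else 0.0

    def distance(self) -> float:
        # Signed distance from the main sequence, in [-1, +1]
        return self.abstractness() + self.instability() - 1

    def zone(self, tolerance: float = 0.25) -> str:
        d = self.distance()
        if d < -tolerance:
            return "zone of pain"
        if d > tolerance:
            return "zone of uselessness"
        return "close to the main sequence"


# Hypothetical packages
packages = {
    "core": PackageStats(incoming=10, outgoing=0, abstract_types=0, total_types=8),
    "service": PackageStats(incoming=6, outgoing=2, abstract_types=4, total_types=5),
    "unused_interfaces": PackageStats(incoming=0, outgoing=3, abstract_types=2, total_types=2),
}
for name, p in packages.items():
    print(f"{name}: I={p.instability():.2f} A={p.abstractness():.2f} "
          f"D={p.distance():+.2f} ({p.zone()})")
```

Here `core` is concrete and heavily depended upon, so it lands in the zone of pain, while `unused_interfaces` is fully abstract and used by nobody, so it lands in the zone of uselessness.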

Conclusion: Metrics for Architects Talk

The main focus of this GSAS edition was metrics, and this talk by Alexander von Zitzewitz was probably the one that covered the most of them. Alexander presented several metrics that, used together, can help assess the quality of a project and identify areas that need improvement. It is surprising, however, that despite their potential value, metrics often do not receive sufficient attention. This may be one of the reasons why, as the report suggests, so many projects fail.

Are you interested in attending GSAS? This year’s edition will focus on modern practices in software architecture: how to be more effective and efficient, and how to enjoy what you do. Industry leaders like Mark Richards, Neal Ford, Jacqui Read, Diana Montalion, Sandro Mancuso, Eoin Woods, and many more will be there to share their knowledge and expertise with attendees. Regular tickets are already on sale; don’t miss the opportunity to attend. Get your tickets here.

Author


Experienced Full Stack Engineer with a demonstrated history of working in the information technology and services industry. Skilled in PHP, Spring Boot, Java, Kotlin, Domain-Driven Design (DDD), TDD and Front-end Development. Strong engineering professional with an Engineer's degree focused on Computer Engineering from Universitat Oberta de Catalunya (UOC).
