Table of Contents

Prompt engineering is a vital aspect of leveraging the full potential of AI language models. By refining and optimizing the instructions given to these models, we can obtain more accurate and contextually relevant responses. In this article, we explore the principles and techniques of prompt engineering, along with its limitations and potential applications.

Principles of Prompt Engineering

1. Writing Clear and Specific Instructions: The success of prompt engineering begins with providing clear and unambiguous instructions. Clear doesn’t necessarily mean a short description. Being specific about the desired output helps the model understand the task more accurately. For example, tell the LLA to be an expert in the field you are asking for.

2. Utilizing Delimiters and Structured Formats: Employing delimiters, such as triple quotes, can prevent prompt injections, ensuring that the AI model focuses solely on the intended task. Structured formats for the response, like JSON or XML, help guide the model effectively.

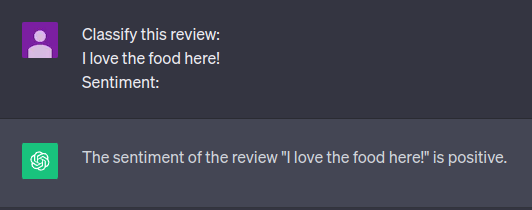

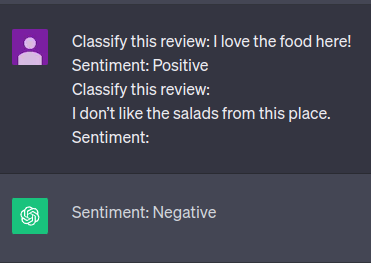

3. Few-shot and One-shot Inference Techniques: Utilizing one-shot or few-shot inference techniques allows AI models to learn from a limited number of examples, making them more versatile in generating relevant responses. The idea is to give successful examples of completing tasks and then ask the model to perform the task.

- Zero-shot inference: there is no example; we ask for a response directly

- One-shot inference: we show the IA an example of how it should be answered.

4. Allow Time for Model Deliberation: Give the model the necessary time to contemplate the task at hand thoroughly.

- Tactic 1: Specify Task Steps: Clearly outline the steps required to accomplish the task, providing the model with structured guidance.

- Tactic 2: Encourage Independent Problem Solving: Instruct the model to independently deduce a solution before arriving at a hasty conclusion. This technique is called Chain-of-Thought Prompting with Reasoning Steps.

- Present a problem: Begin by presenting a specific problem or question.

- Request Initial Model Calculation: Ask the AI to perform an initial calculation or reasoning step.

- Compare User and Model Responses: Finally, evaluate the user’s response by comparing it with the AI’s initial output to determine its correctness.

This approach ensures thorough problem-solving and enhances the model’s performance.

5. Problem-solving using Iterative Prompt Development: By analyzing model responses and refining prompts iteratively, we can achieve more desired outputs effectively.

Model Limitations and Solutions

1. Hallucinations and Dealing with Plausible but False Statements: Sometimes, AI models generate responses that sound plausible but are factually incorrect. To address this, relevant information should be provided first, and responses must be based on this information.

2. Handling Outdated Information: Systems are trained until a specific date, so information about dates or people may not be accurate.

3. Complex mathematical operations: AI models may provide approximate results when asked to perform complex calculations. Providing specific instructions to perform precise mathematical operations can mitigate this issue.

4. Utilizing Temperature Parameter for Controlled Output: By adjusting the temperature parameter, we can influence the level of randomness in the model’s output, producing either more focused or more creative responses.

Applications of Prompt Engineering

1. Summarizing Texts: By instructing AI models to generate concise summaries of texts, we can efficiently extract important information from lengthy documents.

2. Inferring Sentiments and Emotions: Prompt engineering enables AI models to accurately identify sentiments and emotions expressed in texts.

3. Transforming Text Formats: AI models can translate, change tones, and convert text formats, facilitating versatile applications.

4. Expanding Text Content: AI models can be instructed to expand upon specific topics or complete stories based on the provided context.

Ensuring Safe and Reliable Outputs

1. Moderation and Checking for Harmful Content: AI model responses should be checked for potentially harmful content to ensure responsible and ethical use.

2. Fact-Checking and Ensuring Accuracy: Checking AI-generated responses against factual information prevents the dissemination of false or misleading data.

3. Evaluating Model Responses Using Rubrics and Expert Feedback: Using rubrics and expert feedback enables the model to learn and improve its responses continuously.

Conclusion

Effective prompt engineering is a powerful tool that unlocks the true potential of AI language models. By following the principles and techniques outlined in this article, we can harness AI’s capabilities responsibly and achieve more accurate and contextually relevant results. Continuous learning and improvement in prompt engineering will undoubtedly shape the future of AI technology and its applications in various domains.

Author

-

Experienced Full Stack Engineer with a demonstrated history of working in the information technology and services industry. Skilled in PHP, Spring Boot, Java, Kotlin, Domain-Driven Design (DDD), TDD and Front-end Development. Strong engineering professional with a Engineer's degree focused in Computer Engineering from Universitat Oberta de Catalunya (UOC).

View all posts